Why Can't a Model with an IQ of 160 Do the Job of a Marketing Director?

Large models struggle to fit real enterprise business. Tezign has launched GEA, a four-layer intelligent system architecture built around context, aiming to lift enterprise AI across several capabilities at once and deliver tangible results.

“Today, every company in the world needs an intelligent system strategy. This is the new computing.”

At the recently concluded GTC 2026, NVIDIA CEO Jensen Huang made a statement that was bound to unsettle traditional enterprises and software vendors.

Huang went further, stating bluntly that engineers' future compensation will be “salary + tokens,” with tokens becoming the core asset of the AI era.

Huang's judgment is not surprising.

Look around and you will find that many companies have already invested in AI. From early GPU hoarding to all-in-one appliances and then to full-stack solutions, AI has steadily moved into the core processes of enterprise business.

The problem is that the returns on these investments have fallen short of expectations.

Sales teams use it to polish template emails; operations teams use it to summarize meeting minutes. Meanwhile, the metrics that matter most to the enterprise, such as conversion rates and growth bottlenecks, remain almost unchanged.

This is a striking paradox: AI that seems all-powerful on the consumer side looks out of place once it enters complex B2B business flows.

Where exactly does the problem lie?

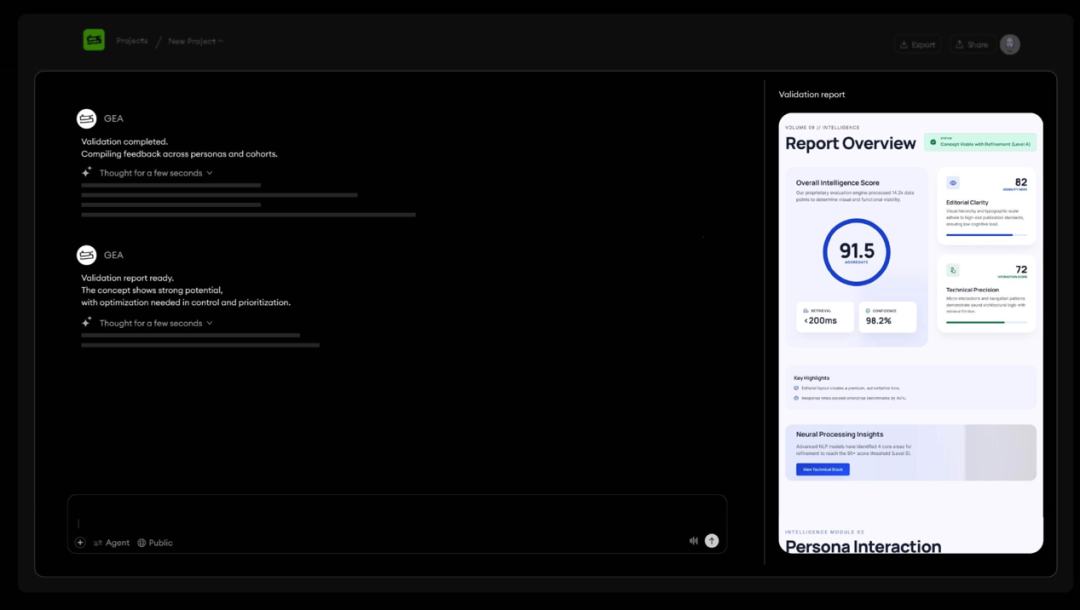

On March 27, Tezign, an Agentic AI company focused on enterprises, officially launched its enterprise-level intelligent system, GEA (Generative Enterprise Agent).

In the view of “Chief Digital Officer,” Tezign's launch not only answers Huang's call for an intelligent-system strategy but also points enterprises caught in model anxiety toward an entirely different path to a breakthrough.

1. The Conflict Between AI and Real Business

To understand the awkward position of enterprise AI today, we must first recognize two fundamental conflicts in the industry.

The first is the homogenization trap of large models.

Today, everyone is focused on foundation models: whose parameters are larger, whose reasoning chains are longer, whose API calls are cheaper.

But amid this frenzied competition, most executives overlook one fact: when everyone can easily access the same top models, the model itself is no longer a moat.

Whether it is GPT, Claude, Gemini, DeepSeek, Qianwen, or Doubao, any API you can pay for is equally available to your competitors.

Tezign founder and CEO Fan Ling said at the launch that large models are rapidly becoming public infrastructure, like electricity, and that in brutal commercial competition no company wins the market because “our electricity is better.”

If you feed the same model nothing but public information from the internet, it will always give you the same generic answer it gives everyone else.

This is the first problem.

The second problem is that the convergent logic inherent in large models runs counter to what real business demands.

This is a deeper conflict.

Large models have always pursued “more accurate answers.”

Faced with a complex problem, a conventional reasoning model draws on its vast pre-training data to narrow the space of possibilities step by step until it “converges” on a single correct, standard answer.

This is a defining trait of large models.

This trait is undeniably ideal for deterministic problems, which is why we keep seeing reports of new models winning math Olympiad gold medals, scoring highly on college entrance exams, or easily passing bar examinations.

Real business, however, is never as simple as solving exam problems.

In highly uncertain business decisions, the value often lies not in quickly reaching a so-called convergent answer, but in how many innovative possibilities the decision-maker can see.

Suppose you are the CMO of a leading consumer brand and need to develop a communication strategy for a major new product launch.

Ask a conventional reasoning model for help, and it will derive a logically sound, eminently reasonable “optimal” plan from vast amounts of historical marketing data and so-called best practices. That plan will be very safe, and almost certainly mediocre.

This is precisely the most common problem enterprises face when using AI.

In real business battles, disruptive victories often come from breaking conventions.

The questions we need to ask are: What if we skip traditional hard-sell advertising? What if we shift the target audience from the usual group A to the overlooked group B?

These exploratory, disruptive perspectives are precisely the divergent thinking that business needs.

Tezign therefore argues that, beyond convergent models that pursue a single answer, enterprise AI urgently needs a “divergent-first” reasoning system.

Before settling on a definite plan of execution, AI must first explore the full range of strategic possibilities.
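Tezign has not published how its divergent-first reasoning works internally. Purely as an illustration of the idea, the Python sketch below assumes strategies can be enumerated along two hypothetical dimensions (channel and audience) and scored by a placeholder function: it first generates every combination, and only then ranks them, returning a shortlist rather than a single “optimal” answer. All names and scores are invented for the example.

```python
from itertools import product
from typing import Callable, NamedTuple


class Strategy(NamedTuple):
    channel: str
    audience: str


def divergent_first(
    channels: list[str],
    audiences: list[str],
    score: Callable[[Strategy], float],
    keep: int = 3,
) -> list[Strategy]:
    # Diverge: enumerate every channel/audience combination instead of
    # committing early to the single most "typical" plan.
    candidates = [Strategy(c, a) for c, a in product(channels, audiences)]
    # Converge only afterwards: rank all candidates, but return several
    # so decision-makers can compare alternatives side by side.
    return sorted(candidates, key=score, reverse=True)[:keep]


# Toy scores, invented for illustration only (hypothetical values).
CHANNEL_SCORE = {"hard_ads": 0.8, "social": 2.0, "events": 1.5}
AUDIENCE_SCORE = {"group_A": 1.0, "group_B": 2.0}


def toy_score(s: Strategy) -> float:
    return CHANNEL_SCORE[s.channel] + AUDIENCE_SCORE[s.audience]


shortlist = divergent_first(["hard_ads", "social", "events"], ["group_A", "group_B"], toy_score)
```

In a real system the scoring function would be the hard part; the point of the sketch is only the ordering of the steps, with divergence before convergence.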

2. What is the Core of Enterprise AI?

With these two conflicts in mind, it becomes clear which core pain points Tezign's GEA is meant to address.

GEA (Generative Enterprise Agent) is Tezign's enterprise-level intelligent system. It is not a single-point AI tool but a system that works toward business objectives: it understands enterprise context, reasons and makes decisions, calls skills to execute tasks, and continuously optimizes results.

Put simply, in the enterprise the question is no longer just “can AI generate content?” but whether it can truly enter business processes, understand organizational context, take part in judgment calls, and be accountable for results.

Tezign refers to this architecture as GEA.

The core logic of GEA is this: built on an enterprise context system (the System of Context), it orchestrates reasoning capabilities, agents, and skills in a unified way, turning general-purpose model capabilities into an enterprise-level intelligent system that can be embedded in the business, evolve continuously, and be accountable for results.

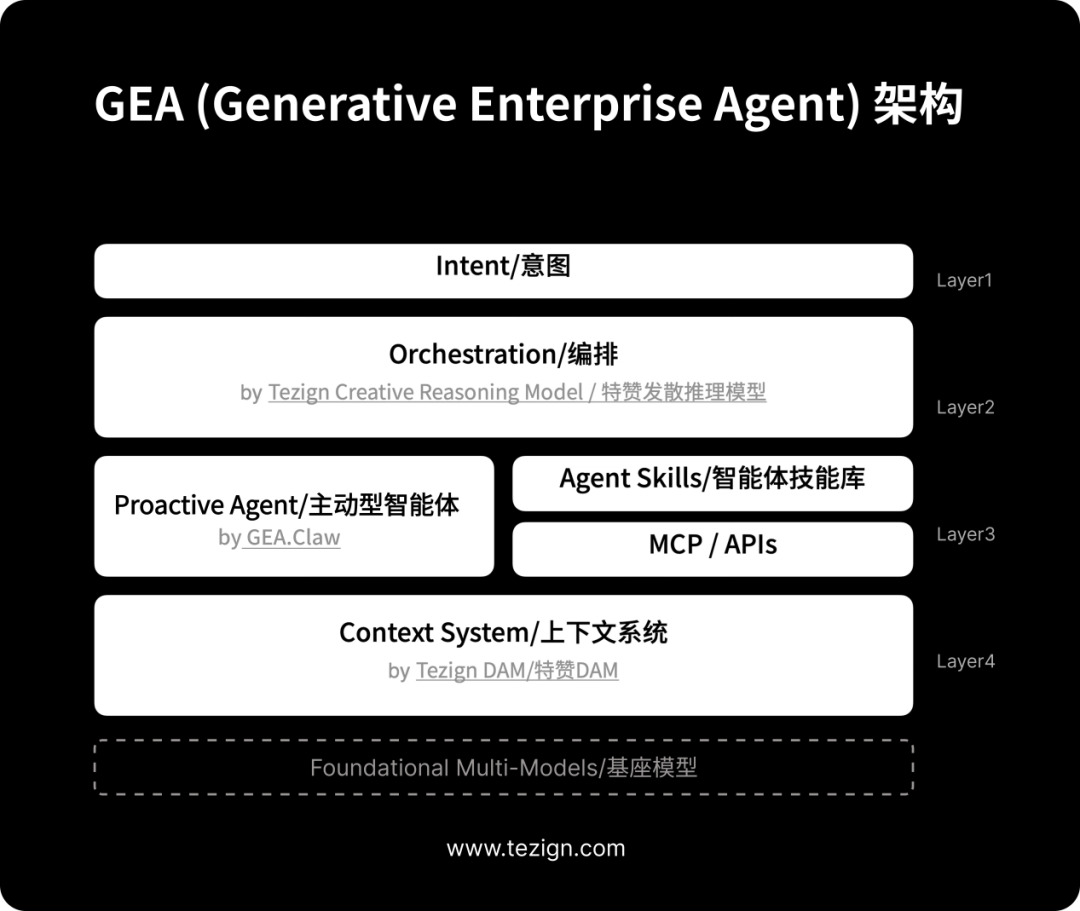

According to Tezign's official definition, this GEA architecture consists of four core layers:

First Layer: Intent Layer—starting from business objectives, discarding cumbersome prompts.

In the past, using AI well meant being an excellent “prompt engineer.” In the GEA architecture, the starting point changes completely: it is no longer cold technical instructions but the enterprise's plainest business objectives.

Executives and business-line leaders need not agonize over prompt wording; they simply state their business intent in natural language: “Help me find growth opportunities in the Southeast Asian market for the next quarter,” or “Prepare three different communication strategies for this new product launch.”

The system then automatically converts these high-level business intents into structured task paths, so that AI can work continuously toward the business objective.
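The article does not specify what a “structured task path” looks like. One plausible reading, sketched below in Python as an assumption rather than Tezign's actual format, is a small dependency graph of steps, each tagged with the capability it needs. The decomposition is hand-written here to stand in for what the system would generate, and the step and capability names are hypothetical; the standard library's `graphlib` then yields an order in which every step runs after its prerequisites.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class Step:
    name: str
    capability: str  # hypothetical label for the kind of skill/model the step needs
    depends_on: list[str] = field(default_factory=list)


@dataclass
class TaskPath:
    intent: str  # the business intent, stated in plain language
    steps: list[Step]

    def execution_order(self) -> list[str]:
        # Map each step to the set of steps it waits on; graphlib returns
        # an order in which every step comes after its dependencies.
        graph = {s.name: set(s.depends_on) for s in self.steps}
        return list(TopologicalSorter(graph).static_order())


# A hand-written decomposition standing in for what the system would generate.
path = TaskPath(
    intent="Prepare three different communication strategies for this new product launch",
    steps=[
        Step("retrieve_brand_context", "context_retrieval"),
        Step("generate_strategies", "divergent_reasoning", ["retrieve_brand_context"]),
        Step("evaluate_strategies", "analysis", ["generate_strategies"]),
        Step("draft_assets", "content_generation", ["evaluate_strategies"]),
    ],
)
```

Representing the path as a graph rather than a flat list leaves room for independent steps to run in parallel.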

Second Layer: Orchestration Layer—the enterprise brain that can think divergently.

Once the intent is issued, the task passes to Tezign's in-house divergent reasoning model, the Creative Reasoning Model.

It will do two things:

First, divergent reasoning: it breaks a complex business intent into multiple possible execution paths and rigorously evaluates the potential business value of each.

Second, model orchestration. The foundation-model landscape changes month to month, and models differ in their strengths: some excel at logical reasoning, some at generating high-quality images, others at handling massive data tables.

Acting as a commander, Tezign's divergent reasoning model determines which capabilities each decomposed sub-task requires, then automatically calls the most suitable of more than 30 foundation models to execute it.
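How GEA actually chooses among its models is not disclosed. A minimal capability-based router, sketched in Python under the assumption that each model can be described by per-capability scores, might look like this; the registry, model names, and scores are all hypothetical.

```python
from typing import Mapping

# Hypothetical registry: invented capability scores (0 to 1) per model.
REGISTRY: dict[str, dict[str, float]] = {
    "model_a": {"reasoning": 0.9, "image": 0.2, "tabular": 0.6},
    "model_b": {"reasoning": 0.5, "image": 0.95, "tabular": 0.3},
    "model_c": {"reasoning": 0.6, "image": 0.1, "tabular": 0.9},
}


def route(capability: str, registry: Mapping[str, Mapping[str, float]] = REGISTRY) -> str:
    # Choose the model with the highest score for the required capability.
    best = max(registry, key=lambda m: registry[m].get(capability, 0.0))
    if registry[best].get(capability, 0.0) <= 0.0:
        raise LookupError(f"no registered model offers {capability!r}")
    return best


def assign(subtasks: Mapping[str, str]) -> dict[str, str]:
    # Map each decomposed sub-task (name -> required capability) to a model.
    return {task: route(cap) for task, cap in subtasks.items()}
```

Keeping the registry as data means adding or retiring a model is a table edit rather than a code change, which matters when the model landscape shifts monthly.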

Third Layer: Agent Skills Layer—the “hands and feet” that truly get the work done.

Thinking must be followed by strong execution. At this layer, GEA has its own dedicated “executor,” GEA Claw: the execution infrastructure that lets enterprise-level agents operate within real business processes.

Reportedly, GEA Claw can call on more than 400 modular Agent Skills covering every aspect of enterprise operations, from content generation, data analysis, and consumer insights to creative evaluation, brand-consistency compliance checks, and more.

More importantly, it uses a proactive agent mechanism that monitors internal data changes and the external competitive environment around the clock. When it detects a data anomaly or a new move by a competitor, it proactively proposes strategic suggestions within pre-established authorization boundaries, or even triggers the next steps directly.
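The distinction between “proposing” and “directly triggering” within authorization boundaries can be illustrated with a small sketch. The Python below is an assumption, not GEA Claw's mechanism: it flags an anomaly with a simple z-score test against recent history, then acts only if the response is on a pre-approved list and otherwise merely proposes it. The metric values, threshold, and action names are hypothetical.

```python
from statistics import mean, stdev


def is_anomaly(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    # Z-score test against recent history (needs at least two data points).
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


def react(history: list[float], latest: float, action: str, authorized: set[str]) -> str:
    if not is_anomaly(history, latest):
        return "no_op"
    # Inside the authorization boundary the agent acts; outside it, it only proposes.
    return f"trigger:{action}" if action in authorized else f"propose:{action}"
```

A production monitor would use more robust detection than a single z-score, but the authorization check is the part that keeps a proactive agent within bounds.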

Fourth Layer: Context Layer—the only moat for enterprise AI.

In the current AI wave, enterprise context has been overlooked by far too many, yet it is the real core barrier of enterprise AI.

As noted earlier, foundation models are public infrastructure, the same for everyone. So what is an enterprise's moat in the AI era? Tezign's answer: context.

Models generate intelligence, but only context generates real business value. Feed the enterprise's unique context into the model, and its output becomes an answer only your enterprise can obtain.

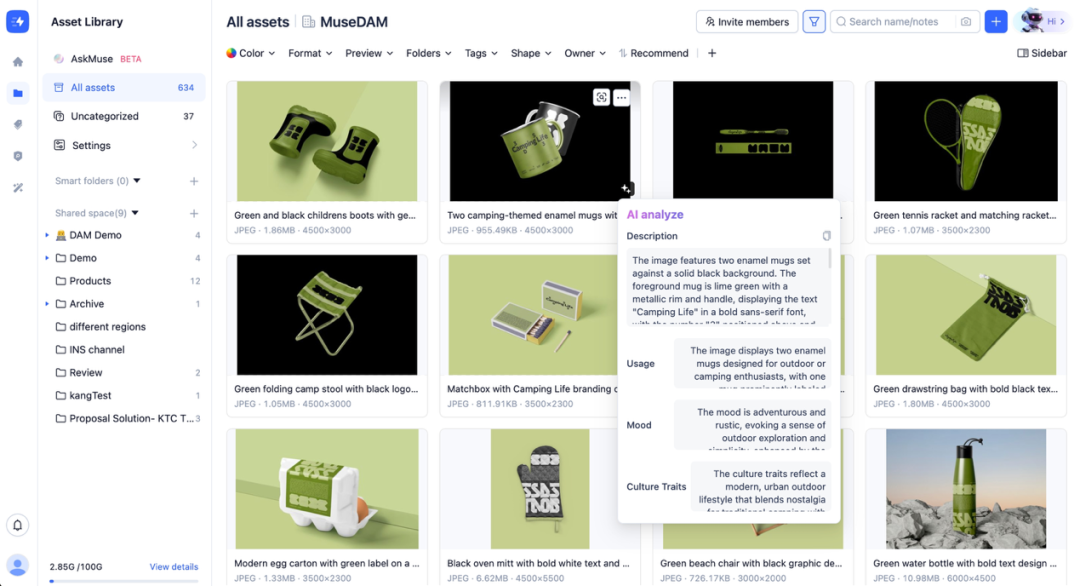

To support this strategy, Tezign also launched the Context System (powered by Tezign DAM). It is more than an upgraded digital asset management system; it is the source of enterprise-level context.

In the past, brand-tone guidelines, vast libraries of marketing visuals, the full decision trail of product development, post-mortems of past campaigns, and even target-user personas sat scattered across departmental cloud drives and folders as unstructured data.

Now, once this content enters the Context System, its built-in local model automatically recognizes and labels it and maps it onto the enterprise's custom tagging system, instantly building a structured, semantically linked “living context.”

It is like giving the enterprise an “external brain” that not only remembers but understands.

With its context-retrieval capabilities, the enterprise effectively gets its own internal Perplexity.

Ask “What were the most effective visual styles we used in the European market last year?” and it not only answers accurately but also surfaces the related, compliant, ready-to-use marketing materials, solving “finding answers” and “finding materials” in one step.
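The “answer plus materials” behavior can be pictured with a toy query over tagged assets. The Python sketch below is an assumption about the general shape, not the Context System's implementation: it presumes each asset already carries labels from ingestion (market, year, visual style) plus a performance metric and a compliance flag, all hypothetical fields. It ranks styles by average engagement for the answer, then returns only compliant assets in the winning style as the materials.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    asset_id: str
    market: str
    year: int
    visual_style: str   # label assumed to be assigned automatically at ingestion
    engagement: float   # hypothetical performance metric
    compliant: bool     # cleared for reuse (e.g. rights and brand checks)


def answer_with_materials(assets: list[Asset], market: str, year: int, top_n: int = 1):
    in_scope = [a for a in assets if a.market == market and a.year == year]
    # "Finding answers": rank visual styles by average engagement.
    by_style: dict[str, list[float]] = defaultdict(list)
    for a in in_scope:
        by_style[a.visual_style].append(a.engagement)
    best = sorted(by_style, key=lambda s: sum(by_style[s]) / len(by_style[s]), reverse=True)[:top_n]
    # "Finding materials": return only compliant assets in the winning styles.
    materials = [a.asset_id for a in in_scope if a.visual_style in best and a.compliant]
    return best, materials
```

A real system would answer free-form questions with semantic retrieval; the sketch only shows why structured labels make it possible to return the answer and the usable assets together.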

And because it holds the enterprise's most vital data assets, the Context System was designed from the outset with enterprise-grade security as a non-negotiable baseline.

It applies strict permission controls and a “progressive disclosure” mechanism, passing the model only the slice of information the current task genuinely needs: what should be shared is shared, and what should not be is withheld, fundamentally eliminating the risk of corporate secrets leaking.
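Tezign does not detail how progressive disclosure is enforced. As one hypothetical reading, the Python sketch below applies three gates before anything reaches a model: a permission gate (a simple numeric clearance level, assumed here), a relevance gate (the document must share a tag with the current task), and a disclosure level that releases summaries by default and full text only on explicit escalation. All field names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    tags: frozenset[str]
    clearance: int   # minimum clearance level required (hypothetical scheme)
    summary: str
    body: str


def disclose(docs: list[Doc], task_tags: set[str], user_clearance: int, level: str = "summary") -> dict[str, str]:
    if level not in ("summary", "body"):
        raise ValueError("level must be 'summary' or 'body'")
    # Gate 1: permissions. Gate 2: relevance to the current task.
    allowed = [d for d in docs if d.clearance <= user_clearance and d.tags & task_tags]
    # Gate 3: progressive disclosure. Summaries by default, full text only on escalation.
    return {d.doc_id: getattr(d, level) for d in allowed}
```

Filtering before the model call, rather than asking the model to ignore sensitive material, means withheld content never enters the prompt at all.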

3. The Second Half of AI Has Just Begun

Armchair strategy never impresses demanding B2B clients. In real business testing, Tezign's GEA has delivered an impressive performance.

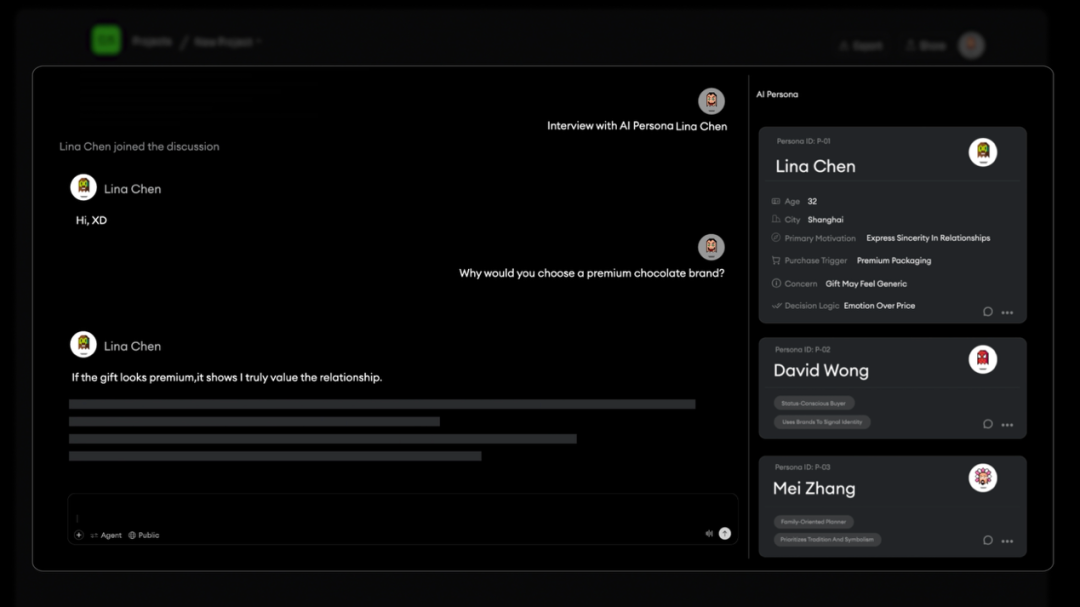

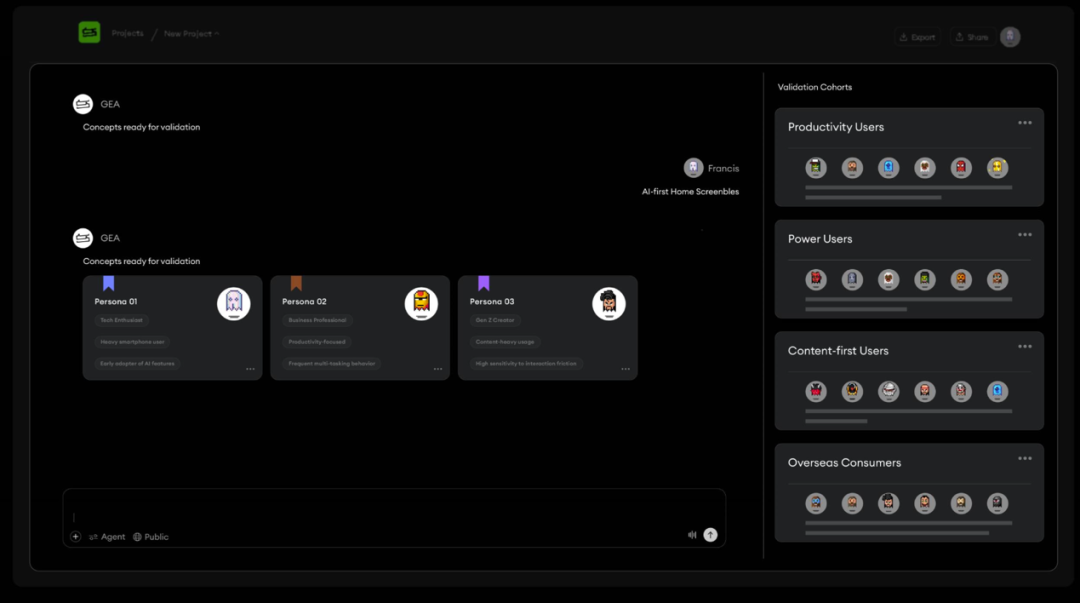

Take a globally renowned fast-moving consumer goods brand: its market research used to take so long that reports were often outdated the moment they were released.

The brand has now introduced Tezign's “Insight Research GEA.” Through its AI Research capabilities, the agent has vastly expanded the sample coverage of traditional research.

More importantly, it resides within the enterprise's context, reasoning continuously and proactively generating new insights for decision-makers by weighing new market hypotheses against one another. The result is a continuously evolving market-intelligence radar that replaces static PowerPoint reports entirely.

Marketing, meanwhile, is currently the front line of AI-driven efficiency gains. A global 3C (computer, communications, and consumer electronics) brand has deployed Tezign's “Content Growth GEA” across its overseas social media campaigns.

Here, GEA Claw, the proactive execution layer, has shown strong cross-system collaboration. Rather than merely writing copy on instruction, it has taken over the complete end-to-end loop:

from positioning the account persona, to automatically generating large volumes of viral content, to precise cross-platform distribution, to performance tracking and dynamic strategy adjustment. It can even coordinate key nodes in influencer networks to improve the brand's visibility in AI-generated answers.

In this scenario, the agent is no longer an assistant; it is directly accountable for the final growth results of this product line in overseas markets, acting as the “number one” owner of the digital business.

As GEA demonstrates, four key transitions are under way in the Agentic AI wave: enterprise applications are moving from “passive response” to “proactive initiative”; from “single functions” to “multiple agents collaborating autonomously”; from “one-time delivery” to “continuous efficiency gains”; and, most importantly, from “generic homogeneity” to “deep understanding of enterprise context.”

The tide of technology cannot be stopped; foundation models push the frontier every few months. If your enterprise system is tightly bound to one specific model, every underlying upgrade means starting over from scratch.

In the view of “Chief Digital Officer,” the GEA architecture's greatest strategic foresight lies in abstracting away uncontrollable model evolution and turning it into stable, operable intelligent assets within the enterprise.

The underlying models can be plugged in and replaced like batteries at any time, but the thick layer in between that understands your enterprise's jargon and contains all your successes and failures—the Context—is the true moat that belongs to the enterprise.
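The “battery” idea corresponds to a familiar software pattern: code against an interface, not a specific model. The Python sketch below is a generic illustration of that pattern, not GEA's code; the backend classes are stand-ins that merely echo the prompt, and the agent and context names are hypothetical. The enterprise-owned context lives in the agent and survives a backend swap untouched.

```python
from typing import Protocol


class ModelBackend(Protocol):
    def generate(self, prompt: str) -> str: ...


class EchoModelV1:
    # Stand-in for one foundation model (hypothetical; just echoes the prompt).
    def generate(self, prompt: str) -> str:
        return f"v1 answer to: {prompt}"


class EchoModelV2:
    # Stand-in for a newer model with the same interface.
    def generate(self, prompt: str) -> str:
        return f"v2 answer to: {prompt}"


class EnterpriseAgent:
    def __init__(self, backend: ModelBackend, context: dict[str, str]):
        self.backend = backend
        self.context = context  # enterprise-owned layer; unaffected by model swaps

    def swap_backend(self, backend: ModelBackend) -> None:
        self.backend = backend  # only the "battery" changes

    def ask(self, question: str) -> str:
        ctx = "; ".join(f"{k}={v}" for k, v in sorted(self.context.items()))
        return self.backend.generate(f"[context: {ctx}] {question}")
```

Because `ModelBackend` is a structural `Protocol`, any class with a matching `generate` method can be plugged in without inheriting from a shared base class.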

For the past three years, enterprises have been experimenting with models. From now on, the strategic decision that truly matters is to build a central intelligent system that starts from top-level business intent, deeply understands the enterprise's own context, is capable of divergent judgment, and keeps evolving in real business.

In the second half of AI, the competition is not about who has more money to buy more computing power, but about who can most quickly embed their digital assets and tacit knowledge into the system's memory.

In the era of context, those who understand their business best will have the last laugh in this brutal efficiency revolution. (Source: WeChat Official Account “Chief Digital Officer”)

Category: Media & Press

Date: 2026-03-27
