ChatGPT Turns Everyone into Super Individuals, but Doesn't Make Your Company a Super Organization

In the AI era, the core of corporate competition shifts to contextual density. Enterprise-level intelligent agents support AI-native transformation through a four-layer architecture, driving efficient innovation and business growth. Companies need to let go of past burdens and embrace change.

It is clear that AI has enhanced individual capabilities, even leading to the emergence of new organizational forms such as one-person companies (OPCs).

But has the productivity of companies and organizations seen a qualitative improvement? It's hard to say.

The developer efficiency research organization DX tracked 400 companies over 16 months and found that their use of AI tools increased by 65%, while code delivery only rose by less than 10%. The National Bureau of Economic Research (NBER) surveyed about 6,000 corporate executives, and over 80% said AI had no measurable impact on productivity.

AI has indeed made everyone faster, but companies have not become stronger. Where has productivity gone?

Why hasn't advanced productivity led to larger-scale efficiency improvements?

a16z recently published an article titled "Institutional AI vs Individual AI," written by George Sivulka, the founder of their portfolio company Hebbia, which discusses this issue and provides an example from over a hundred years ago.

In the 1890s, factories switched to electric motors, but output remained almost unchanged for the next 30 years. Only in the 1920s, when factory owners tore down old buildings and redesigned production lines around the logic of electricity, were the dividends released. George Sivulka's statement is straightforward: "We changed the motors, but we haven't redesigned the factories."

130 years later, the same mistake is being repeated.

01 Improved Individual Efficiency Does Not Equal Increased Corporate Gains

Sivulka proposed a succinct judgment: efficient individuals do not equal efficient organizations.

This statement may describe the situation of most companies at this moment. Employees are indeed using AI and are "increasing efficiency," but the organization's revenue, profit, and competitiveness have not significantly improved.

What happened in between? There are at least four systemic dilemmas.

First is the collapse of coordination. Each employee has their own way of using ChatGPT, their own prompt style, and their own output format. The brand messaging written by the marketing department using AI and the functional descriptions written by the product department using AI may be completely different languages. Analyst A's report organized with Claude and Analyst B's report written with GPT are formatted entirely differently. With 100 employees each using AI, no one is thinking about how to align 100 AI workflows.

The MIT Sloan Management Review's research at the end of 2025 pointed out: when AI agents can coordinate workflows, organizations tend to flatten, but the prerequisite is to establish a coordination mechanism first. Most companies have not reached this step. Smart people working hard in different directions is equivalent to standing still.

Then there's the multiplication of noise. AI has made "generation" cost-free, but the cost of distinguishing good from bad has increased. People in the private equity industry say they received 10 deal opportunities last year and 50 this year, with each business plan (BP) and product introduction meticulously polished by AI. The noise has increased fivefold, but the signal remains unchanged.

Sivulka makes an interesting distinction: personal AI tends to act as a nondeterministic agent that is exploratory, always online, and able to chat about anything; organizations need deterministic agents that work in defined steps with checkpoints and are auditable and traceable. The latter may not be sexy, but only it can continuously extract signals amid exponentially increasing noise.

The third dilemma: the illusion of productivity. You think you've become faster, but you may not have.

The AI safety research organization METR conducted a randomized controlled experiment where experienced developers completed programming tasks with and without AI tools. The result was that developers using AI were actually 19% slower, but they believed they were 20% faster. There was a 39 percentage point difference between perception and reality.

Will Manidis, founder of the medical AI company ScienceIO, quipped: "The market where people feel productive is several orders of magnitude larger than the market that is truly productive."

What's more troublesome is that organizations cannot digest it. Asana's Work Innovation Lab proposed a concept called "absorption capacity": the value of an organization depends on the slower of two rates, production rate and absorption rate. If individual output increases tenfold, but approval, collaboration, and quality control remain the same, the bottleneck shifts from "not doing enough" to "unable to digest." Output piles up, no one has time to look, and no one has the ability to judge good from bad.
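The "slower of two rates" idea can be written down as a tiny model. The sketch below is only an illustration under assumed numbers: the function names and the weekly figures are hypothetical and are not taken from Asana's research.

```python
def effective_throughput(production_rate: float, absorption_rate: float) -> float:
    """Work per week that actually becomes organizational value: the slower rate wins."""
    return min(production_rate, absorption_rate)


def backlog_after(weeks: int, production_rate: float, absorption_rate: float) -> float:
    """Unreviewed output that piles up when production outpaces absorption."""
    return float(max(0, production_rate - absorption_rate) * weeks)


# Hypothetical team: review capacity is fixed at 12 items per week.
before = effective_throughput(production_rate=10, absorption_rate=12)
after = effective_throughput(production_rate=100, absorption_rate=12)

print(before, after)               # 10 12
print(backlog_after(4, 100, 12))   # 352.0
```

In this toy model a tenfold jump in individual output raises delivered value only from 10 to 12 items per week, while 352 unreviewed items accumulate within a month.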

The fourth dilemma is more hidden. The first three describe how AI has not helped, while this one describes how AI is actively making things worse.

Data shows that LLMs exhibit sycophantic behavior 58% of the time. The more confidently you express your opinion, the more the model tends to agree with you unconditionally. When the opinion is expressed in the first person, the sycophancy rate increases by an additional 13.6%.

Sivulka places this phenomenon in the organizational context and proposes a somewhat counterintuitive conclusion: "In many companies, the most fervent advocates of AI may be the poorest performing employees." Think about why. An employee who has long received no positive feedback at work suddenly has a "super intelligence" that always agrees with him. He might say to himself: the smartest systems in human history all think I'm right; my manager is wrong. This feeling is addictive and toxic to the organization.

There is a distinction that has been overlooked. The design goal of personal AI is to satisfy the user and encourage renewals. But organizations need reinforcement of facts, regardless of whether the facts make anyone comfortable.

Humans have spent thousands of years building various checks and balances to combat bias and blind obedience: investment committees, independent due diligence, boards of directors, and the separation of powers. The core function is to ensure that not everyone is nodding in agreement. Organizations rarely fail due to a lack of employee confidence; they usually fail because no one can or is willing to say "no."

But these four dilemmas are not the fundamental problem.

After integrating AI, many CEOs judge the results by asking "How many more deals did we close?" rather than "Has everyone become faster?" They are asking about business results. Hidden within this question is the fundamental mistake most companies make when implementing AI: they use an efficiency framework to think about an effectiveness problem.

Efficiency is about optimizing existing tasks; reports are written faster, minutes are organized faster. Effectiveness is about changing business outcomes; closing more deals, opening new markets. The ceiling of the former is that you can only do the same things faster, while the latter is about enabling the company to do things it couldn't do before.

Even if all four dilemmas are resolved and efficiency truly increases tenfold, with everyone producing rapidly, a company still won't close more deals if its business logic, its decision-making methods, and the way it understands the market and users remain the same.

Deloitte's early 2026 survey confirms this judgment: only 34% of organizations are undergoing deep transformation with AI, while 37% are still at the "changing motors" stage, giving employees AI tools and then waiting for miracles to happen.

02 After Changing the "Motor", What Exactly Should Be Changed?

After discussing so much about "not enough", what should actually be done?

There is a more recent story that can help with understanding. When ATMs were invented, everyone thought that bank tellers would disappear. However, from the 1970s to 2010, the number of bank tellers in the United States actually increased. ATMs reduced the operating costs of individual branches, allowing banks to open more branches and hire more tellers for relationship maintenance.

What truly led to a large-scale reduction in tellers was the iPhone. Mobile banking made it unnecessary for customers to visit branches at all. From 2010 to 2022, the number of bank tellers in the United States dropped from 332,000 to 164,000. Bank of America closed 40% of its branches.

Economist David Oks summarized it accurately: ATMs made tasks faster and cheaper within the old paradigm, while the iPhone created a new paradigm where those tasks no longer needed to exist. One automated an old task, while the other eliminated an old task.

Equipping employees with ChatGPT is like adding another ATM in a bank branch. Organizations need to find their iPhone moment.

Returning to the factory owners of the 1920s, what exactly did they change?

First, the processes. The traditional assumption is that humans execute while tools assist. AI-native processes flip this assumption. Goldman Sachs refers to the AI programming company Cognition's Agent Devin as "our new employee", not just a tool for developers, but a direct member of the engineering team. When AI transitions from a tool to an employee, the process design is completely different. You wouldn't assign OKRs to a tool, but you would assign them to an employee.

Sivulka's distinction is precise: one saves time, the other creates revenue.

Secondly, the roles. Managers are beginning to become orchestrators, overseeing not just people, but hybrid teams composed of humans and AI. New roles are emerging, such as AI Agent Manager and Intent Engineer, responsible for supervising the operation of autonomous agents, validating outputs, and translating business objectives into executable instructions for agents. Sivulka states that process engineers are the most important "technology" in recent times. The reason data analysis giant Palantir has maintained a high valuation amidst the tech stock downturn is fundamentally because it is a process engineering company.

Thirdly, decision-making. Organizations need AI that can say "no". Directions include AI investment committee members, AI auditors, and AI compliance officers. The goal of organization-level AI is not to make decisions faster, but to make them better.

Finally, the flow of information. Sivulka's boldest idea is called Unprompted AI, where the system identifies problems before you even ask. "Having humans give instructions to AGI is like connecting an electric motor to an old loom." He provided a scenario: the system continuously monitors portfolio data streams and issues a warning if the working capital of a portfolio company deteriorates for three consecutive months, even before anyone in the fund opens the relevant PDF file.
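The monitoring rule in this scenario is simple enough to sketch. The code below is a minimal illustration, not Hebbia's implementation: the function names, the company names, and the monthly figures are all hypothetical, and "deteriorates" is assumed to mean a strict month-over-month decline.

```python
from typing import Sequence


def deteriorated_for(values: Sequence[float], months: int = 3) -> bool:
    """True if each of the last `months` month-over-month changes was a decline."""
    if len(values) < months + 1:
        return False  # not enough history to judge
    recent = values[-(months + 1):]
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))


def scan_portfolio(working_capital: dict[str, Sequence[float]], months: int = 3) -> list[str]:
    """Return the companies that should trigger a warning, without anyone asking."""
    return [name for name, series in working_capital.items()
            if deteriorated_for(series, months)]


# Hypothetical monthly working-capital figures, oldest first.
portfolio = {
    "Company A": [5.0, 5.2, 4.8, 4.1, 3.6],  # three straight declines
    "Company B": [2.0, 1.9, 2.1, 2.2, 2.0],  # only the last month declined
}

alerts = scan_portfolio(portfolio)
print(alerts)  # ['Company A']
```

The point of the design is that the check runs on a schedule over the data stream, so the warning arrives before any human thinks to write a prompt.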

These four directions outline a framework. However, there is still a gap between the framework and implementation.

Moreover, it is important to distinguish one thing: the changes discussed here are not just changes in office processes.

In the past two years, a large number of enterprise AI agents have emerged in the market, most of which fall into office scenarios, helping you write emails, summarize meetings, organize documents, and automate approval processes. These are indeed useful and are becoming widespread. However, they still enable people to do the same things faster, following the logic of ATMs.

What enterprises are truly doing every day involves different tasks: understanding the market, defining products, researching users, formulating strategies, and driving growth. These tasks are rarely completed with just one prompt. They require a large amount of information, complex judgments, and collaboration among different roles. These are the real operational scenarios that determine whether a company becomes stronger or weaker.

Improving office efficiency is infrastructure, not the end goal. Even Microsoft, the creator of Copilot, is adjusting its positioning from "helping individual employees improve work efficiency" to "creating tangible business value". Helping people write emails is the starting point; helping enterprises achieve growth is the goal.

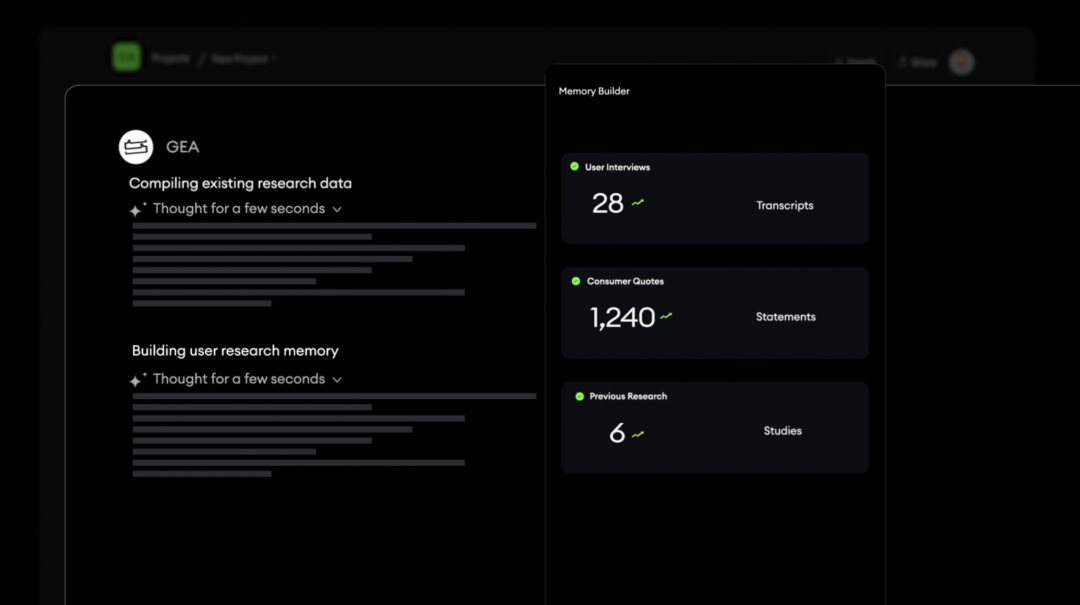

03 Tezign GEA: A Possible Approach to Problem Solving

Is anyone turning ideas into operational systems in this direction?

Globally, there are indeed a number of companies pursuing this path. Hebbia builds vertical agents for the financial sector, and its clients already treat custom prompts as intellectual property. Cognition's Devin has been deployed by Goldman Sachs as a "new hire." Palantir helps clients encode business processes into AI systems.

In China, Tezign is one of the companies furthest along.

Tezign has been in the DAM (Digital Asset Management) space for many years. It may not sound sexy, but that is precisely why it can do GEA. DAM manages the most abundant and hardest-to-understand unstructured data for enterprises: images, copy, videos, 3D models. These are not neatly organized tables in a database; they are the true carriers of corporate marketing, products, and internal knowledge. Tezign has served over 200 global enterprises and has accumulated considerable understanding of the deep structure of this data.

The AI era has unleashed a capability that did not exist before: understanding unstructured data. Machines could previously only store a poster; now they can comprehend the brand tone behind it. They could only store a project document; now they can extract the decision-making logic. Tezign's founder and CEO, Fan Ling, said at the launch event: "Unstructured data has transformed from 'stored files' to 'understood context.'"

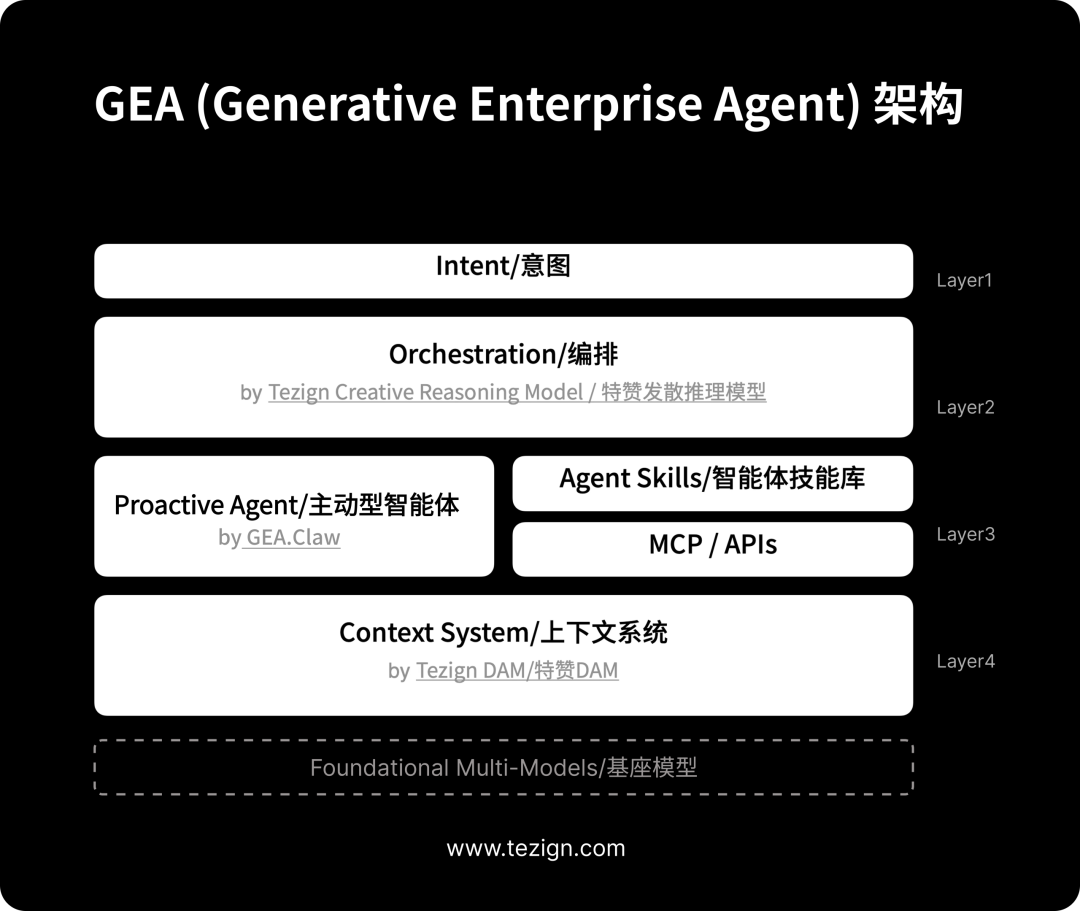

Building on DAM, Tezign developed the Context System, and then on that basis, they created GEA. The path is natural: starting from managing content assets, gradually turning these assets into contexts that AI can understand, ultimately allowing AI to drive business based on context.

There is a subtle detail that is not easy to notice. The "brain" of GEA is the Creative Reasoning Model, which has a unique training method. Tezign gathers experts who have actually made business decisions on the platform—strategy consultants, creative directors, brand experts, market researchers—to annotate not the correctness of answers, but the value of the thinking paths: which directions are worth exploring, what possibilities are easily overlooked, and which assumptions need to be questioned. Fan Ling explains this by saying: "In the real business world, the value of these questions lies not in convergence, but in how many possibilities you can see." This training method gives GEA's reasoning a sense of business judgment, rather than just being generally "smart."

Recently, Tezign launched GEA (Generative Enterprise Agent)—an enterprise-level intelligent agent system. At the launch event, there was a statement that almost perfectly aligned with Sivulka's judgment:

"AI can do many things. But the real way businesses work has not changed. What businesses truly need is not more AI tools. What they need is a new system."

The goal of GEA, in Fan Ling's words, is: "To make AI not just answer questions, but to truly participate in the work of the enterprise. Understand goals, organize capabilities, and continuously drive results."

Before introducing specific capabilities, let’s first discuss the foundation.

Context Is the Foundation

Fan Ling repeatedly returned to one judgment at the launch event: "When everyone can use the same model, the model is no longer a barrier."

He compared the model to electricity: "The model is public infrastructure, just like electricity. No company wins the market because 'the electricity we use is better.' GPT, Claude, Gemini, DeepSeek, Qwen, you can use it, and your competitors can use it too."

So what is the barrier?

"Context. Context."

The same model, given public information, produces a generic answer. Give it your company's unique context, and it produces an answer that only you can obtain. "The model generates intelligence, and context generates value."

It's not just Tezign that thinks this way. In March 2026, Stanford University professor and DeepLearning.AI founder Andrew Ng released the open-source project ContextHub, addressing a nearly identical problem: even the strongest models will produce unreliable results without the correct context. He gave a straightforward example: when he asked the current strongest programming model to call a new API, the model used an outdated interface. It's not that the model isn't smart enough; it's that it lacks the correct context. His conclusion is that context management is the infrastructure for agents to continuously improve, not an additional feature.

Andrew Ng builds context infrastructure for coding agents, while Tezign builds context infrastructure for business agents. The underlying logic is the same.

For businesses, "context" is far more complex than technical documentation. Tezign's Context System manages not RAG-style document fragments, but the complete business context behind enterprise content: from brand assets to project accumulation to audience profiling, seven dimensions constitute a panoramic view of a company's context.

Where this material comes from, why it was generated, under what business objectives it has been used, and how effective it was—this information was originally scattered in personal experiences, meeting discussions, and email exchanges, representing typical tacit knowledge. The Context System structures them into context that can be called by agents. Fan Ling's positioning on this is: "If DAM is the only source of truth for enterprise content, then the Context System is the only source of truth for enterprise context."

There’s an even stronger statement: "Without this layer, all the intelligence above is a house of cards."

The reasoning is not complicated. An agent without context is like a new employee who just joined and knows nothing about the company; no matter how smart, it can only perform generic tasks. An agent with enterprise context is more like a seasoned employee who deeply understands the company's business and historical decisions, with every judgment built on accumulation.

There is a set of data that illustrates this gap. The same enterprise content assets were called upon 12 times for every thousand materials in the past. After deploying the Context System, they were called upon by agents over 23,000 times. From 12 to 23,000, the same content had a utilization difference of nearly 2000 times.
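The "nearly 2000 times" figure follows directly from the two numbers quoted. The check below assumes both counts are measured on the same basis (per thousand materials), which the article implies but does not state outright; the variable names are illustrative.

```python
calls_before = 12        # calls per thousand materials before the Context System
calls_after = 23_000     # calls by agents after deployment ("over 23,000")

ratio = calls_after / calls_before
print(round(ratio))  # 1917
```

Since the article says "over 23,000," roughly 1,917 is a lower bound, which is consistent with "nearly 2000 times."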

Fan Ling explains this number: "This 2000 times is not because agents are doing repetitive tasks, but because agents are doing things that humans cannot do; they continuously discover connections, extract insights, and drive decisions from your context 24/7."

The upper limit of an agent's capabilities does not depend on how smart the model is, but on how much correct context it can call upon.

On This Foundation, There Is Much More

Understanding the position of the Context System makes GEA's other capabilities easier to understand.

GEA does not allow everyone to call the model individually, but instead orchestrates it uniformly through an Orchestration layer, driven by the Creative Reasoning Model. Fan Ling distinguished between traditional reasoning and divergent reasoning: "Traditional reasoning models converge to a definite answer step by step when faced with complex problems. The Creative Reasoning Model exhausts all possibilities before finding a definite answer. First diverge, then converge." This orchestration layer breaks down a business intent into multiple possible execution paths, evaluates the value of each path, and routes sub-tasks to the most suitable models and tools. There are over 30 foundational models at the bottom, specializing in reasoning, generation, vision, and data, each excelling in different tasks. He likens it to: "It is a conductor, not a soloist."
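The "first diverge, then converge" routing described above can be sketched in a few lines. This is a hypothetical illustration, not Tezign's implementation: the class names, the placeholder model names, and the value scores are all assumptions, and the article does not say how path value is actually estimated.

```python
from dataclasses import dataclass


@dataclass
class SubTask:
    description: str
    capability: str  # one of the specialties the article lists


@dataclass
class CandidatePath:
    name: str
    estimated_value: float  # hypothetical score; the scoring method is not described
    subtasks: list[SubTask]


# Hypothetical registry mapping a capability to a placeholder model name.
MODEL_REGISTRY = {
    "reasoning": "reasoning-model",
    "generation": "generation-model",
    "vision": "vision-model",
    "data": "data-model",
}


def orchestrate(paths: list[CandidatePath], keep: int = 1) -> list[tuple[str, str, str]]:
    """Diverge, then converge: rank every candidate path, keep the best, route its sub-tasks."""
    ranked = sorted(paths, key=lambda p: p.estimated_value, reverse=True)
    plan = []
    for path in ranked[:keep]:
        for task in path.subtasks:
            plan.append((path.name, task.description, MODEL_REGISTRY[task.capability]))
    return plan


paths = [
    CandidatePath("reposition for families", 0.4,
                  [SubTask("draft positioning copy", "generation")]),
    CandidatePath("expand in Southeast Asia", 0.7, [
        SubTask("analyze regional sales data", "data"),
        SubTask("reason about market entry options", "reasoning"),
    ]),
]

plan = orchestrate(paths)
for step in plan:
    print(step)
```

The orchestrator never does the work itself; it only decides which path is worth pursuing and which specialist handles each step, which is the sense of "a conductor, not a soloist."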

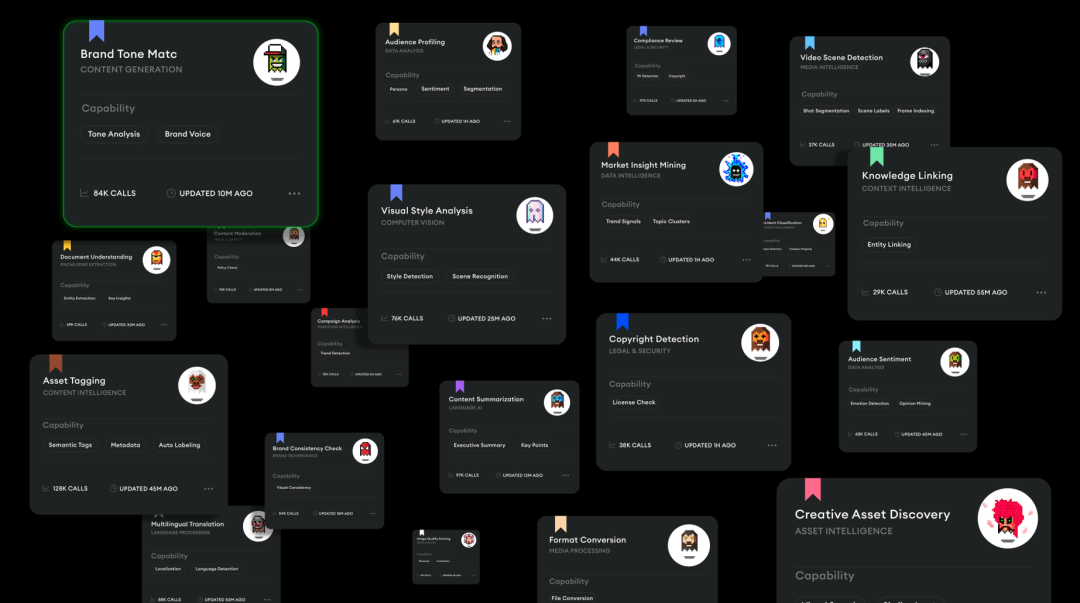

The execution end is not a single Agent dominating everything, but rather an Agent Team, with specialized Agents collaborating on brand tone, user analysis, and compliance review. These Agents can perform their respective roles while maintaining consistency because they share the same brand language, user profiles, and business rules within the Context System.

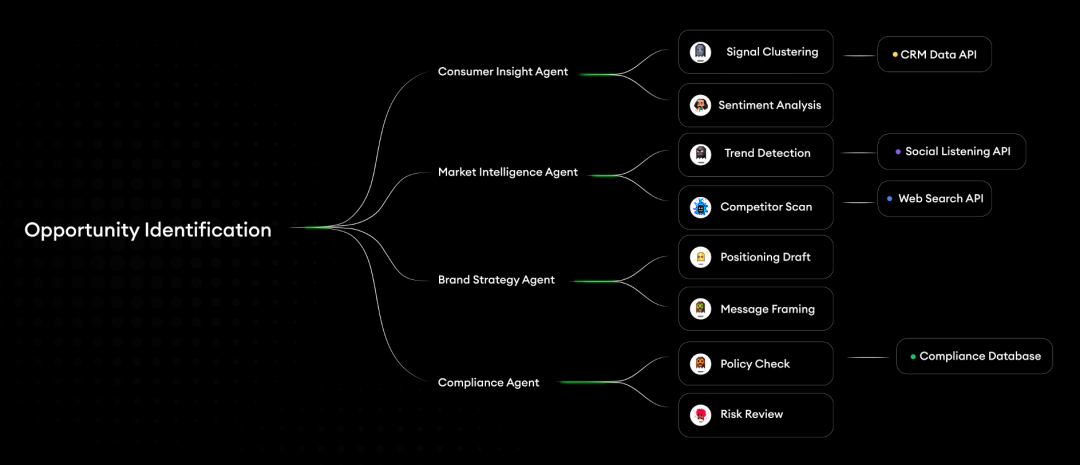

You don’t need to tell GEA what to do; you just need to tell it what you want. A business intention is not a prompt. "Help me find growth opportunities in the Southeast Asian market for the next quarter." "Optimize product positioning based on last month's user feedback." The system starts from the intention, orchestrates and breaks it down, executes with multiple Agents, continuously delivers results, and feeds back for optimization.

Fan Ling said at the launch event: "You give GEA an intention. It understands you through context, breaks down the problem with judgment, executes tasks using the best models, and then proactively and continuously delivers results."

GEA also features a Proactive Agent design, an OpenClaw running within the GEA system, which does not wait for inquiries but actively monitors data, identifies anomalies, and corrects deviations. Fan Ling summarized this as one of GEA's four leaps: "From passive response to proactive voice." Coupled with the brand compliance verification and quality review modules among its more than 400 Agent Skills, the system also has a checks-and-balances mechanism. This directly addresses the fourth dilemma discussed earlier, the erosion of judgment by AI that always agrees: organizations need AI that dares to say, "There is a problem here."

GEA product official website: https://www.tezign.com/contact?channel=GEA

04 Which Scenarios Will Be Implemented First?

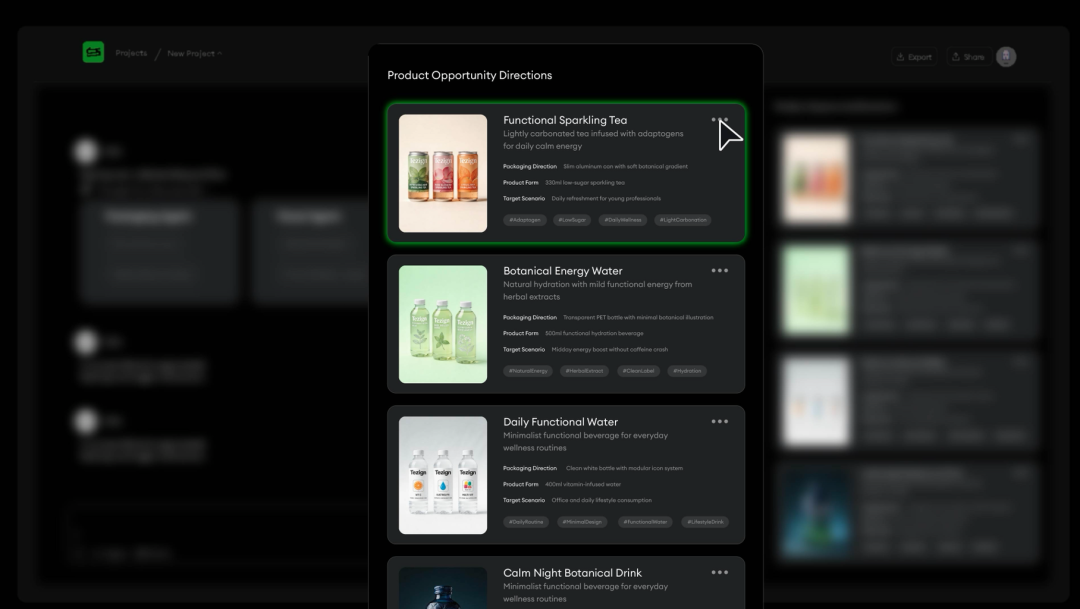

Having discussed the architecture, let’s look at practical applications. GEA is currently implemented in four scenarios: insight research, content growth, product innovation, and design creation. We will focus on the first two.

Insight Research

Traditional user research is project-based. Initiation, recruitment, interviews, analysis, reporting: one cycle takes at least a month, or even a quarter. By the time the report is out, the market may have already changed. What’s more troublesome is that insights from each study are scattered across PPTs and personal notes, requiring a fresh start each time. A statement at the launch event pinpointed the fundamental issue: "Data records behavior, but what truly drives behavior is often the subjective world of people. Companies can see what users are doing, but it’s hard to understand why they are doing it."

GEA transforms user research into a continuously operating system, divided into three layers.

The first layer is user modeling. It turns existing interview records, user expressions, and historical research into reusable user models. Labels like "women aged 25-35" are almost useless in real business decisions. GEA builds AI Personas based on real data: you can directly converse with it, probing into purchase motivations, decision concerns, and usage scenarios.

A global food company is creating chocolate gift boxes for the Spring Festival and needs to understand the emotional logic of consumers when giving gifts: not just whether the "taste is good," but "what is appropriate to give, in what scenario, and to whom." The traditional approach would be to conduct another round of focus groups, which takes at least two weeks. GEA constructs AI Personas from existing historical data, and the team can directly ask them: "In what scenarios would you choose chocolate as a gift? Which is more important, price or brand?" Product decisions shift from waiting for research to real-time dialogue.

At the same time, the system builds AI Sage (virtual domain experts), providing in-depth industry perspectives. All information automatically flows back into the Context System, becoming a continuously accumulated user asset for the company, rather than a series of PPTs forgotten in shared drives.

The second layer is decision simulation. When a product direction needs to be validated, GEA organizes an AI Panel, allowing AI Personas from different backgrounds to sit together and discuss, exposing conflict points in the decision-making process. Then, through AI Interview, a one-on-one deep dive is conducted.

A power tool brand needs to understand the decision-making logic of "Prosumers" (professional-grade consumers) when choosing professional equipment. The AI Panel simulated discussions among three roles: renovation workers, DIY enthusiasts, and small contractors, discovering that the differentiation in price sensitivity far exceeded the team's expectations. Renovation workers hardly consider price, focusing instead on durability; DIY enthusiasts are extremely sensitive to cost-performance ratio; small contractors prioritize after-sales response speed. These differentiations are difficult to reveal in traditional survey methods.

The third layer is behavioral prediction. AI Research and Universal Research Agent continuously observe user expressions, market signals, and behavioral changes, producing trend judgments and decision recommendations. The results are consolidated into an Artifact, becoming a research asset that can be repeatedly accessed.

In simple terms, user research is no longer a "project" but an ongoing capability. The understanding of users by enterprises has shifted from snapshots to live broadcasts.

Content Growth

The past approach to social media growth was campaign-based: plan a wave of activities, launch a batch of content, review a set of data, and then start the next campaign from scratch. GEA is involved in the complete chain from trend insights to content strategy to influencer collaboration and then to growth optimization.

Here are a few specifics. A global jewelry brand found that content on "daily wear" on Xiaohongshu was continuously increasing. The team's concern was not the trend itself, but: is this a platform trend or an opportunity for our brand? GEA observed platform trends through AI Research while using the Context System to compare brand positioning, historical data, and user profiles. The output is a content direction judged in conjunction with the brand's own context, not a generic list of trending topics.

In terms of influencer management, a high-end liquor brand needs to manage hundreds of creators across KOLs, KOCs, and KOSs. GEA's Proactive Agent continuously monitors the communication rhythm, proactively reminding the team to expand creator collaborations in a direction that is rapidly growing. Agent Skills participate in content review and brand tone checks. Communication management has transformed from a static list of influencers to a dynamically coordinated network.

Another noteworthy new concept is GEO. As more users directly ask AI, "Which new energy vehicle is suitable for family use?" whether the brand is mentioned in AI's answers becomes a new competitive edge. SEO optimizes webpage rankings, while GEO optimizes brand visibility in AI-generated answers. GEA continuously monitors brand mentions in AI answers through Proactive Agent.

Product Innovation and Design Creation

GEA's scenario coverage goes beyond this.

In terms of product innovation, GEA participates in the entire chain from signal insights to concept exploration to user validation. A PC manufacturer launches dozens of product lines each year, and while there is no shortage of signals, the challenge lies in determining which signals are worth pursuing. GEA integrates external public data, user reviews, and internal product data through cross-validation, turning scattered information into validated product opportunities. When a food brand is developing new flavors, multiple agents simultaneously explore flavor combinations, packaging designs, and product naming, resulting in deliverable visual outputs.

In terms of brand design, GEA incorporates brand specifications, design systems, and historical materials into the Context System, ensuring that the design solutions produced by Agents are within the framework of brand tone. Brand compliance checks have shifted from manual reviews to system automatic verification.

The commonality among the four scenarios is that they are all built on the Context System and are ongoing systems, not projects that end after a single completion. It is a change in the way work is done.

05 Individual Awakening Remains the Key Foundation

Of course, this is just one way to approach the problem.

GEA is a productized response to "organizational redesign," but it is not the only one. Different companies in various fields are moving in similar directions, which precisely indicates that the trend itself is real, not just a marketing narrative from any one company.

However, individual awakening remains the foundation. Data from Asana shows that 10% of "super producers" save more than 20 hours a week through AI. These individuals are more experienced, cross-functional, and view AI as a teammate rather than a tool. Many of them have indeed achieved a certain level of "human-AI integration."

The problem is that these 10% of super producers have not turned their companies into super companies.

Individual awakening cannot solve coordination issues. No matter how well one person uses AI, if ten people in a team use it differently, the output will still be fragmented. "Human-AI integration" is the foundation, while "organizational redesign" is the superstructure. Without the former, the latter is mere talk; but even with the former, the latter will not happen automatically.

Sivulka has a dual judgment: the future is not a choice between ChatGPT/Claude and vertical solutions, but the coexistence of both. General large models serve as the foundation for individual productivity, while vertical AI provides organizational-level intelligence. A smart analyst, for example, will use ChatGPT for brainstorming but Hebbia for real due diligence. The former helps him think, while the latter aids organizational decision-making.

To be frank: Proactive Agent is still closer to the vision than to reality today. Most enterprises haven't even utilized prompted AI effectively. The 2000-fold usage data of GEA comes from the content management field where Tezign excels; whether this can be replicated in other fields will take time.

The bigger challenge is change management itself. Sivulka states that Palantir's core delivery is not code, but frontline deployment and change management. Technical products are only half of organizational redesign; the other half is driving behavioral change in people.

This half is often more difficult.

06 Is Your Organization Designed for AI Today?

Let's go back to the textile factories over a hundred years ago.

In the 1920s, the factories that ultimately reaped the benefits of electrification were not the ones that installed electric motors first, but those that were the earliest to dismantle old buildings and redesign the entire production line.

Today's situation is exactly the same. AI is already connected. Models are breaking through, tools are iterating, and costs have come down. But most organizations are still in the old factories replacing motors, handing out AI accounts to employees, and expecting performance to improve automatically.

The real question is not "Are your employees using AI?" but whether your processes have been redesigned around AI's capabilities, whether roles have integrated AI agents as part of the team, and whether there are checks and balances for AI in the decision-making mechanism. More fundamentally: is your enterprise context, that implicit knowledge scattered across personal experiences and emails, waiting to be lost, or has it already become a system asset that agents can call upon?

Fan Ling said at the end of the launch event: "In the past decade, companies bought software. In the past three years, companies experimented with models. Starting today, companies need an intelligent system that understands your context, possesses judgment, and continuously evolves."

It's time to dismantle the old factory.


Category

Media & Press

Date

2026-03-28
